Today I had an interesting discussion with a colleague about the possible attack vectors on the communication between a Relying Party (RP) application and ACS (Azure Access Control Service, also known as Windows Azure Active Directory Access Control). The discussion focused on the extent to which the RP can be sure that it is in fact communicating with the STS (Security Token Service, which in this scenario is ACS). The discussion was a bit blurred by some other issues that arose, so we didn’t really get to the heart of the matter, but we were both left with a nagging concern that the communication was not as secure as we would want it to be.

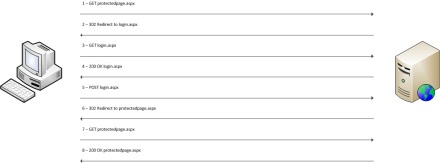

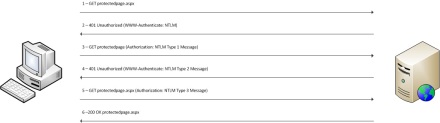

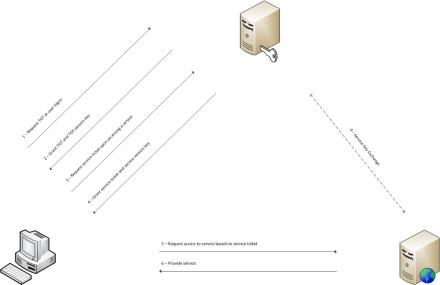

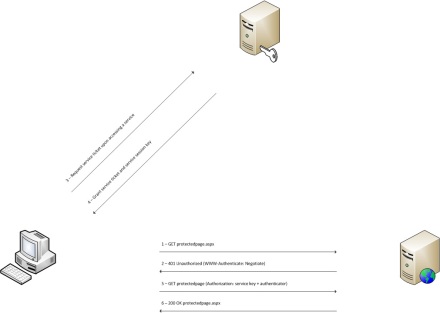

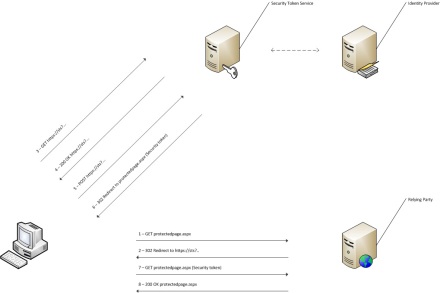

Now it’s never a good idea to leave a factual disagreement undecided, and when it comes to security there’s all the more reason to figure out what’s really going on. So, to set the background: we have an RP that lets its users authenticate with ACS (which in turn uses LiveID as Identity Provider). We consciously did not configure the tokens to be encrypted, so they are only signed by ACS. Token signing cannot be disabled (or I did not find the button for it), and that makes good sense, too. Since the communication between the RP and the STS all flows through the client (see this post for an extensive description), we want to make sure that the client is not able to tamper with the SAML token that it gets from the STS to pass on to the RP. There’s no viable scenario in which you would want to let clients fiddle with the tokens, so not being able to disable token signing is a good thing as far as I’m concerned.

OK, so token signing is obligatory. If you don’t customize your ACS namespace, it broadly works as follows: ACS generates an X.509 certificate that is used for signing the tokens. To sign a token, ACS uses this certificate’s private key, and it then embeds the corresponding public key in the RequestSecurityTokenResponse that flows from ACS to the client and then to the RP. The RP extracts this public key from the response and uses it to validate the signed SAML token that is also part of this message.

So the question becomes: what is keeping a client from creating its own X.509 certificate on the fly, creating its own SAML token, signing it with the private key of the rogue certificate, and embedding the certificate’s public key in the response? How will the RP, upon extracting the public key, detect that this is not the public key of the STS? Of course, the normal way of doing this would be to place the public key of the legitimate signing certificate in the Trusted People certificate store on the RP’s host (assuming it’s Windows). But that’s not how it is configured if you just use the Identity and Access Tool to register your app as an RP at your ACS namespace. In fact, the relevant part of your web.config will look like this:

    <system.identityModel>
      <identityConfiguration>
        <audienceUris>
          <add value="[your URI]" />
        </audienceUris>
        <issuerNameRegistry type="System.IdentityModel.Tokens.ConfigurationBasedIssuerNameRegistry, System.IdentityModel, Version=4.0.0.0, Culture=neutral, PublicKeyToken=b77a5c561934e089">
          <trustedIssuers>
            <add thumbprint="[a certificate thumbprint]" name="[a friendly name]" />
          </trustedIssuers>
        </issuerNameRegistry>
        <certificateValidation certificateValidationMode="None" />
      </identityConfiguration>
    </system.identityModel>

As this snippet shows, the certificateValidationMode is None, meaning that you can put anything you like in whichever certificate store on the RP’s host, but your app will not consult that store to validate anything. Of course, we can always switch the certificateValidationMode to PeerTrust or PeerOrChainTrust and add the public key of the signing certificate to the Trusted People certificate store. But if that were the only way to be sure that the token does indeed come from the STS, it would mean two things. First, the default configuration as done by the Identity and Access Tool would leave you severely unprotected. Second, there would be no way to get any level of issuer assurance in, for example, an Azure Websites scenario.

Luckily, it turns out that things are not as bad as they seem, and the key to this is the <trustedIssuers> element in the snippet above. As you can see, this element contains a collection of certificate thumbprints. The one entry that we have in our web.config in this case was placed there by the Identity and Access Tool when I first set up my application to use ACS as STS, and it is the thumbprint of the certificate that ACS uses to sign my tokens. So now, when the RP receives a RequestSecurityTokenResponse from the client and extracts the public part of the certificate from that message, it first validates the certificate by comparing its thumbprint to the one in the web.config. If the client forged the response with its own certificate, the thumbprint of that certificate will not match the one in the web.config, and authentication fails. The class responsible for this is the ConfigurationBasedIssuerNameRegistry, which holds a registry of all valid issuers based on the items in the <trustedIssuers> element. This class is referenced in the <issuerNameRegistry> element as the class to use for this purpose.
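The thumbprint check described above can be sketched in a few lines. This is an illustration in Python, not WIF’s actual code: the class and function names are hypothetical, the certificates are stand-in byte strings rather than real DER-encoded X.509 certificates, and the thumbprint is assumed to be the SHA-1 hash of the certificate’s encoded bytes (which is how Windows computes it).

```python
import hashlib
from typing import Dict, Optional


def thumbprint(cert_der: bytes) -> str:
    """Return the SHA-1 hash of the encoded certificate as uppercase hex."""
    return hashlib.sha1(cert_der).hexdigest().upper()


class ThumbprintIssuerRegistry:
    """Hypothetical stand-in for a configuration-based issuer name registry."""

    def __init__(self, trusted_issuers: Dict[str, str]) -> None:
        # Normalize thumbprints so spacing and case in the config do not matter.
        self._trusted = {
            tp.replace(" ", "").upper(): name
            for tp, name in trusted_issuers.items()
        }

    def get_issuer_name(self, cert_der: bytes) -> Optional[str]:
        """Map a signing certificate to an issuer name, or None if unknown."""
        return self._trusted.get(thumbprint(cert_der))


def require_known_issuer(registry: ThumbprintIssuerRegistry, cert_der: bytes) -> str:
    """Reject the token when the embedded certificate maps to no issuer name."""
    issuer = registry.get_issuer_name(cert_der)
    if not issuer:
        raise PermissionError("token signed by an unknown certificate")
    return issuer


# Stand-in bytes (assumption); a real RP would use the embedded certificate.
ACS_CERT = b"stand-in for the ACS signing certificate"
ROGUE_CERT = b"stand-in for a certificate the client generated itself"

registry = ThumbprintIssuerRegistry({thumbprint(ACS_CERT): "my-acs-namespace"})
```

With this registry, `require_known_issuer(registry, ACS_CERT)` returns the configured name, while the same call with `ROGUE_CERT` raises, because the rogue certificate hashes to a thumbprint that is not in the list.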

Of course, there’s always a theoretical possibility that a second certificate resolves to the same thumbprint. After all, the output space for thumbprints is limited (a thumbprint is a SHA-1 hash, so 160 bits) and in fact smaller than the output space for certificate keys. But the chance that an attacker is able to deliberately create a certificate that results in the same thumbprint as the ACS certificate is extremely small. But hey, if the risk is still too big for your comfort, you can always take the Trusted People certificate store route on top of all this :).
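To put a rough number on “extremely small”, here is a back-of-the-envelope sketch. It assumes a 160-bit SHA-1 thumbprint and treats the hash as an ideal random function, which is an idealization and not a claim about SHA-1’s actual strength. The function name is made up for this example. Note that the publicly known SHA-1 collision attacks produce two inputs that the attacker both controls; matching the thumbprint of an existing certificate is the harder second-preimage problem.

```python
from fractions import Fraction

THUMBPRINT_BITS = 160  # length of a SHA-1 digest


def match_probability_bound(attempts: int) -> Fraction:
    """Upper bound on the chance that any of `attempts` random certificates
    hits one specific thumbprint, under the ideal-hash assumption."""
    if attempts < 0:
        raise ValueError("attempts must be non-negative")
    return min(Fraction(1), Fraction(attempts, 2 ** THUMBPRINT_BITS))
```

Even a billion billion attempts (`match_probability_bound(10**18)`) leaves the bound below 1e-30 under this assumption.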

UPDATE: Today the discussion with my colleague continued for a bit. He made the valid point that the above behavior (i.e. authentication fails if the thumbprints don’t match) is not intended as a security feature. Instead, what happens here is that WIF wants to determine the name of the issuer based on the incoming public key. It does this by calling into the ConfigurationBasedIssuerNameRegistry, which will return an empty string if the incoming public key does not resolve to a known thumbprint. Further down the line, WIF throws an exception if the issuer name is null or empty. This means that the presence of a matching thumbprint is not really what is evaluated here; the thumbprint just happens to function as the “bridge” between the public key and the issuer name. This becomes a real concern because you can provide your own implementation of the IssuerNameRegistry instead of going with the default configuration-based variant. If that custom implementation happens to ignore the thumbprint while resolving the certificate into an issuer name, the RP can no longer be sure that the incoming token actually came from the STS.
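The difference between a registry that ignores the thumbprint and one that pins it can be illustrated as follows. Again, this is a Python sketch of the logic and not WIF code: both registry classes and `is_token_accepted` are hypothetical names, and the certificates are stand-in byte strings.

```python
import hashlib
import hmac
from typing import Optional


def thumbprint(cert_der: bytes) -> str:
    """SHA-1 hash of the encoded certificate as uppercase hex."""
    return hashlib.sha1(cert_der).hexdigest().upper()


class AnyCertificateRegistry:
    """Hypothetical custom registry that names the issuer without looking
    at the certificate. Every signing certificate is accepted."""

    def get_issuer_name(self, cert_der: bytes) -> Optional[str]:
        return "ACS"


class PinnedCertificateRegistry:
    """Hypothetical custom registry that only names the issuer when the
    certificate matches the expected thumbprint."""

    def __init__(self, expected_thumbprint: str, issuer_name: str) -> None:
        self._expected = expected_thumbprint.replace(" ", "").upper()
        self._issuer_name = issuer_name

    def get_issuer_name(self, cert_der: bytes) -> Optional[str]:
        if hmac.compare_digest(thumbprint(cert_der), self._expected):
            return self._issuer_name
        return None


def is_token_accepted(registry, cert_der: bytes) -> bool:
    """Mimics the rule from the text: a null or empty issuer name is rejected."""
    return bool(registry.get_issuer_name(cert_der))
```

With `AnyCertificateRegistry`, `is_token_accepted` returns `True` for a certificate the client generated itself, which is exactly the hole described above. `PinnedCertificateRegistry` closes it by refusing to produce a name for anything but the expected certificate.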

So, to conclude: if there’s really no possibility to do proper certificate validation, you can still be sure that the token came untampered from the STS, but only as a side effect of resolving the certificate to a name through the thumbprint. If at all possible, it’s a recommended practice to set the certificateValidationMode to at least PeerTrust and to place the token signing certificate’s public key in the Trusted People certificate store.